The introduction of predictive metrics in Google Analytics 4 is a huge game changer for digital marketers. Instead of reports about past behavior of website visitors, Google’s Artificial Intelligence can now give you a glimpse of the future and a clear hint on where to put your marketing focus in the next week(s). Here is another prediction for you. Read the article and you will impress your clients or boss the next time they talk to you about GA4.

X Analytics

5 Use Cases of Data Matching

Data matching refers to the process of comparing two sets of acquired data. This can be accomplished in a variety of ways, but the process is frequently based on algorithms or coded loops in which processors perform sequenced analyses of each specific piece of a given data set, pairing it against each specific piece of another data set, or contrasting complex variables such as strings for specific similarities. Data matching can be used to eliminate duplicate content or for various types of data mining. Many data matching efforts are made with the goal of establishing a critical link between two data sets for advertising, cybersecurity, or other applied reasons. Here are 5 common use cases…
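As a rough illustration of the loop-based comparison described above, here is a minimal Python sketch that pairs each string in one list with its closest counterpart in another. The sample names, the `match_records` helper, and the 0.85 threshold are assumptions made for this example; they do not come from the article, and production matching would typically need more careful normalization and blocking.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio, ignoring case and outer whitespace."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def match_records(left, right, threshold=0.85):
    """Pair each item in `left` with its most similar item in `right`.

    A pair is kept only if its similarity reaches `threshold`
    (an illustrative cutoff, not a recommended value).
    """
    matches = []
    for a in left:
        # Compare this record against every record in the other data set.
        best = max(right, key=lambda b: similarity(a, b), default=None)
        if best is not None and similarity(a, best) >= threshold:
            matches.append((a, best))
    return matches


# Hypothetical sample data: the same companies spelled differently in two systems.
crm_names = ["Acme Corp.", "Globex Inc", "Initech"]
billing_names = ["ACME Corp", "Globex, Inc.", "Umbrella LLC"]

pairs = match_records(crm_names, billing_names)
for left_name, right_name in pairs:
    print(f"{left_name!r} matches {right_name!r}")
```

The nested comparison is quadratic in the size of the inputs, so it only suits small data sets; larger jobs usually narrow the candidate pairs first.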

What is AWS Neptune?

AWS Neptune is the cloud leader's entry among graph databases poised to drive next-generation recommendation, fraud detection, and other apps.

Your Data Supply Chains Are Probably a Mess. Here’s How to Fix Them.

Data is more important than ever, but most organizations still struggle with a few common issues: They focus more on data infrastructure than data products; data is often created with the needs of a particular department in mind, but little thought for the end use; they lack a common “data language” with each department coding and classifying with their own system; and they’re increasingly focused on outside data, but have few quality control systems in place. By focusing on “data supply chain” management, companies can address these and other issues. Similar to physical supply chains, companies should think systematically, focus on end products, define standards and measurements, introduce quality controls, and constantly refine their approach across all phases of data gathering and analysis.

Microsoft’s Kate Crawford: ‘AI is neither artificial nor intelligent’

Kate Crawford studies the social and political implications of artificial intelligence. She is a research professor of communication and science and technology studies at the University of Southern California and a senior principal researcher at Microsoft Research. Her new book, Atlas of AI, looks at what it takes to make AI and what’s at stake as it reshapes our world. You’ve written a book critical of AI but you work for a company that is among the leaders in its deployment. How do you square that circle?

Setting the record straight on NFTs, the most misunderstood financial advancement in history

Non-fungible tokens, or NFTs, offer businesses a tamperproof certificate of authenticity and ownership, but they need to be easier to use.

Three resources to help you understand today’s data and AI regulatory landscape

The data privacy and AI protection regulatory landscape seems to evolve on a constant basis. Almost three years ago, organizations were developing roadmaps for strong information and data governance programs to comply with the EU General Data Protection Regulation (GDPR). Shortly prior to this, businesses were facing the U.S. California Consumer Privacy Act (CCPA) and

Orphaned Analytics: The Great Destroyers of Economic Value

I’m overjoyed to announce the release of my latest book “The Economics of Data, Analytics, and Digital Transformation.” The book takes many of the concepts discussed in this blog to the next level of pragmatic, actionable detail. Thanks for your support!

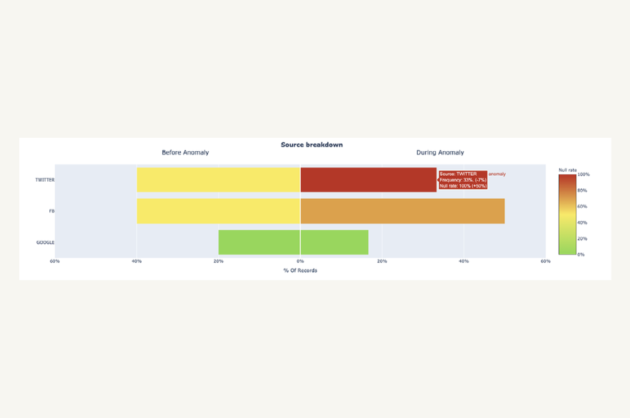

Root Cause Analysis for Data Engineers

The Definitive Guide: 5 essential steps for troubleshooting data quality issues in your pipelines. This guest post was written by Francisco Alberini, Product Manager at Monte Carlo and former Product Manager at Segment. Data pipelines can break for a million different reasons, and there isn’t a one-size-fits-all approach to understanding how or why. Here are five critical steps data engineers must take to conduct root cause analysis for data quality issues.

What analytics leaders need to know about graph technology

The massive data sets, complex processing capabilities and advanced analytical models in the current digital business landscape create the perfect storm of opportunity for data and analytics. After languishing for decades, graph approaches are being embraced by analysts, data scientists and data management professionals. Graph technology is a sort of catch-all phrase that includes graph theory, graph analytics and graph data management.

The Future of Sales Analytics Promises More Commercial Impact

The future of sales analytics brings with it a more accurate yet dynamic picture of buyer behavior and needs, which will drive significantly more commercial impact for frontline sales teams and commercial leadership than is typically offered by sales analytics today.

6 Web Scraping Tools That Make Collecting Data A Breeze

The first step of any data science project is data collection. While it can be the most tedious and time-consuming step during your workflow, there will be no project without that data. If you are scraping information from the web, then several great tools exist that can save you a lot of time, money, and effort.

Data Science and Artificial Intelligence Is Revolutionizing The Sports Industry

Data science uses a range of tools, algorithms, and machine learning principles to find patterns in raw data. It has generated quite a buzz in the tech world.

Contra wants to be the community that independent workers are missing

Whether you’re “working on something new,” according to your Twitter bio, or “self-employed,” according to your LinkedIn bio, founder Ben Huffman thinks his platform, Contra, will be the best way for independent workers to explain and monetize what they are working on. Contra is a platform that wants professionals to create profiles that show project-based […]